Hi team,

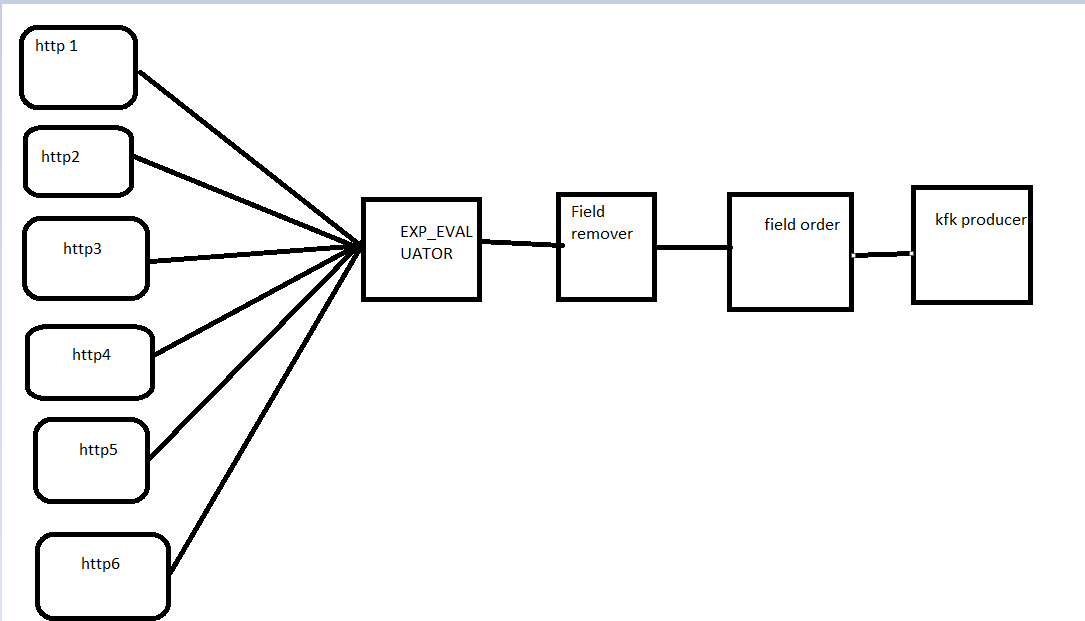

I have 6 APIs, and for each one I have to build 3 pipelines: API to Kafka, Kafka to file system (FS), and FS to target table. That means building and running 18 pipelines in total (6 × 3). Is there an approach that reduces the number of pipelines? For example, could the first pipeline push the data from all 6 APIs into a single Kafka topic, so that one pipeline then generates the data files from Kafka to the FS and one more loads them from the FS into the target table?

FYI: the target table is the same for all 6 APIs.
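
To make the idea concrete, here is a minimal Python sketch of the consolidated first stage, independent of any particular pipeline tool. It only illustrates the pattern I am asking about: every record is wrapped with the name of the API it came from and sent to one shared topic. The topic name, the API names, and the `fetch` and `send` callables are all hypothetical placeholders; `send` stands in for whatever Kafka producer call is actually used.

```python
import json
from typing import Callable, Iterable

# Hypothetical placeholders: real topic and API names will differ.
TOPIC = "all_apis_raw"
API_NAMES = ["api_1", "api_2", "api_3", "api_4", "api_5", "api_6"]


def to_envelope(api_name: str, payload: dict) -> bytes:
    """Wrap one record with its source API so downstream stages can tell the APIs apart."""
    envelope = {"source_api": api_name, "payload": payload}
    return json.dumps(envelope, sort_keys=True).encode("utf-8")


def publish_all(
    fetch: Callable[[str], Iterable[dict]],
    send: Callable[[str, bytes, bytes], None],
) -> int:
    """Read every API and send all records to the same topic; return the record count."""
    count = 0
    for api_name in API_NAMES:
        for record in fetch(api_name):
            # The API name is used as the message key (an assumption of this sketch),
            # so a key-based partitioner would keep each API's records together.
            send(TOPIC, api_name.encode("utf-8"), to_envelope(api_name, record))
            count += 1
    return count
```

With this shape, the Kafka to FS and FS to target table stages would each be a single pipeline reading the shared topic, and the `source_api` field would still be available if the rows ever need to be separated again.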