Product: StreamSets Transformer

Issue:

Steps required to get PySpark transformations working on a Dataproc cluster

Solution:

PySpark jobs on Dataproc are run by a Python interpreter on the cluster. Job code must be compatible at runtime with the Python interpreter's version and dependencies.
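One quick way to confirm which interpreter version a job will see is to run a small check with the cluster's Python. The sketch below is illustrative only: the helper name `check_python3` is hypothetical and is not part of Transformer or Dataproc.

```python
import sys


def check_python3(version_info=sys.version_info):
    """Return "major.minor" for a Python 3.x interpreter, otherwise raise.

    check_python3 is a hypothetical helper written for this article.
    """
    major, minor = version_info[0], version_info[1]
    if major != 3:
        raise RuntimeError(
            "Python 3.x is required, found {}.{}".format(major, minor)
        )
    return "{}.{}".format(major, minor)


if __name__ == "__main__":
    # Run this with the same interpreter the cluster uses for PySpark jobs.
    print("Interpreter:", sys.executable)
    print("Version:", check_python3())
```

Running it with the interpreter you intend to use for PySpark shows the executable path, which is the value the Spark configuration needs later.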

You can configure your desired Python version by following this document from the Dataproc guide:

Configure the cluster's Python environment

Note: The PySpark processor can use any Python 3.x version. However, StreamSets recommends installing the latest version.

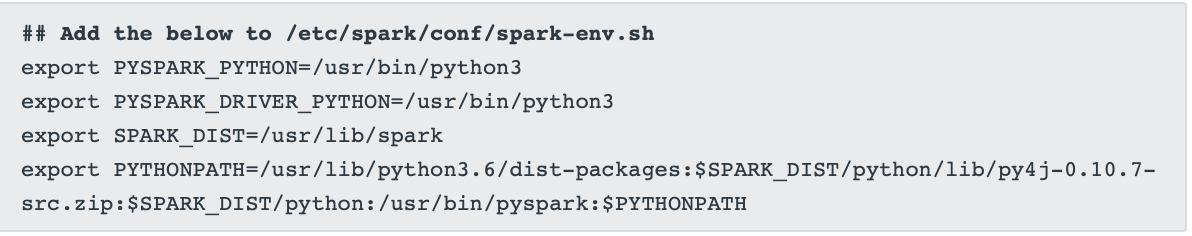

Now that Python is available on the Dataproc cluster, change your Spark configuration to point to this Python interpreter.

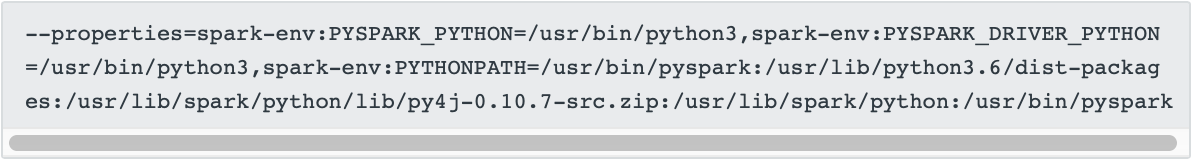
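As a rough text version of this setting, the sketch below builds the two standard Spark properties that select the Python interpreter, `spark.pyspark.python` and `spark.pyspark.driver.python`. The interpreter path and the helper name `pyspark_python_properties` are assumptions for illustration; use the path where Python is actually installed on your cluster.

```python
def pyspark_python_properties(python_path):
    """Build Spark properties that point PySpark at one interpreter.

    pyspark_python_properties is a hypothetical helper for illustration.
    """
    if not python_path.startswith("/"):
        raise ValueError("python_path must be an absolute path")
    return {
        # Interpreter used by the executors (and by the driver by default).
        "spark.pyspark.python": python_path,
        # Interpreter used by the driver; set explicitly to keep both in sync.
        "spark.pyspark.driver.python": python_path,
    }


# Assumed path: replace with the interpreter location on your cluster.
PYTHON_PATH = "/opt/conda/default/bin/python"

for name, value in sorted(pyspark_python_properties(PYTHON_PATH).items()):
    print("{}={}".format(name, value))
```

The printed `name=value` pairs are what would be entered as Spark configuration properties. Pointing both properties at the same interpreter avoids a version mismatch between the driver and the executors.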

Alternatively, use the equivalent REST option for Dataproc.